kang's study

Day 18: Convolutional Neural Networks

Convolutional Neural Networks

Loading the Fashion MNIST data

In [1]:

from tensorflow import keras

from sklearn.model_selection import train_test_split

(train_input, train_target), (test_input, test_target) = \
    keras.datasets.fashion_mnist.load_data()

train_scaled = train_input.reshape(-1, 28, 28, 1) / 255.0
# Conv2D expects every input image to have a channel dimension.
# The images are grayscale, but one extra dimension is added so each image is 3-D: (28, 28, 1).

train_scaled, val_scaled, train_target, val_target = train_test_split(
    train_scaled, train_target, test_size=0.2, random_state=42)
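To see what the reshape and scaling do to the array, here is a minimal sketch that uses a small random NumPy array as a stand-in for the real images. The name `fake_images` and the sample count of 10 are assumptions for illustration only; they are not part of the original notebook.

```python
import numpy as np

# Hypothetical stand-in for train_input: 10 grayscale images of 28x28 pixels.
rng = np.random.default_rng(42)
fake_images = rng.integers(0, 256, size=(10, 28, 28), dtype=np.uint8)

# -1 lets NumPy infer the number of samples; the trailing 1 is the channel axis.
# Dividing by 255.0 converts the integers to floats in the range 0 to 1.
fake_scaled = fake_images.reshape(-1, 28, 28, 1) / 255.0

print(fake_images.shape)  # (10, 28, 28)
print(fake_scaled.shape)  # (10, 28, 28, 1)
```

The pixel values themselves are unchanged apart from the scaling; only the shape gains a trailing channel axis.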

Building the convolutional neural network

In [2]:

model = keras.Sequential()

First convolutional layer

In [3]:

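model.add(keras.layers.Conv2D(32, kernel_size=3, activation='relu',
                              padding='same', input_shape=(28,28,1)))

# The depth of each kernel always matches the number of channels in its input.
# The batch dimension is left out of input_shape, because the batch size can differ from one run to the next.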

In [4]:

model.add(keras.layers.MaxPooling2D(2))

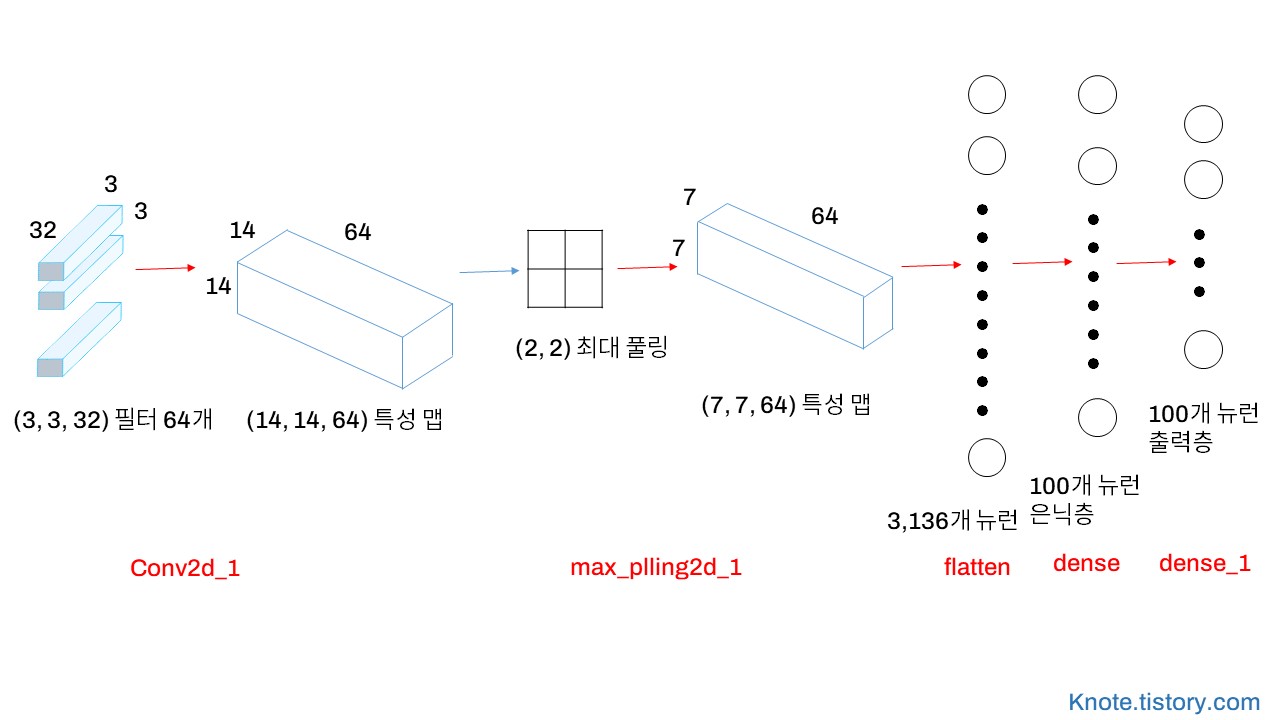
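The spatial size of the feature maps after this convolution and pooling step can be worked out by hand. The sketch below assumes a stride of 1 for the convolution and a pooling stride equal to the pool size (the Keras defaults); the helper names `conv_output_size` and `pool_output_size` are hypothetical and not part of Keras.

```python
def conv_output_size(size, kernel, padding="same", stride=1):
    """Spatial output size of a convolution along one axis."""
    if padding == "same":
        # 'same' pads the input so that output = ceil(input / stride).
        return -(-size // stride)
    # 'valid' uses no padding.
    return (size - kernel) // stride + 1


def pool_output_size(size, pool):
    """Output size of non-overlapping pooling (stride equals pool size)."""
    return size // pool


after_conv = conv_output_size(28, kernel=3, padding="same")
after_pool = pool_output_size(after_conv, 2)
print(after_conv, after_pool)  # 28 14
```

With 32 filters, the feature maps leaving the first block therefore have shape (14, 14, 32).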

Second convolutional layer + fully connected layers

In [5]:

model.add(keras.layers.Conv2D(64, kernel_size=(3,3), activation='relu',
                              padding='same'))

# Using more filters produces more feature maps, so the layer can pick up a wider variety of features.

model.add(keras.layers.MaxPooling2D(2))

In [6]:

model.add(keras.layers.Flatten())

model.add(keras.layers.Dense(100, activation='relu'))

model.add(keras.layers.Dropout(0.4))

model.add(keras.layers.Dense(10, activation='softmax'))

In [7]:

model.summary()

# Fully connected layers overfit easily.

# Convolutional layers capture image features well with far fewer parameters.
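The parameter counts reported by `model.summary()` can be reproduced by hand, which makes the contrast between convolutional and fully connected layers concrete. This is a minimal sketch based on the layer sizes defined above; the helper names `conv2d_params` and `dense_params` are hypothetical.

```python
def conv2d_params(kernel_h, kernel_w, in_channels, filters):
    # One weight per kernel element per input channel per filter, plus one bias per filter.
    return kernel_h * kernel_w * in_channels * filters + filters


def dense_params(inputs, units):
    # One weight per input-unit pair, plus one bias per unit.
    return inputs * units + units


conv1 = conv2d_params(3, 3, 1, 32)    # first Conv2D layer
conv2 = conv2d_params(3, 3, 32, 64)   # second Conv2D layer
flat = 7 * 7 * 64                     # Flatten output after two 2x2 poolings
dense1 = dense_params(flat, 100)      # Dense(100)
dense2 = dense_params(100, 10)        # Dense(10)

print(conv1, conv2, dense1, dense2)   # 320 18496 313700 1010
print(conv1 + conv2 + dense1 + dense2)  # 333526
```

The two convolutional layers together hold fewer than 19,000 parameters, while the first dense layer alone holds more than 300,000.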

Visualizing the convolutional layers

In [8]:

keras.utils.plot_model(model)

In [9]:

keras.utils.plot_model(model, show_shapes=True, to_file='cnn-architecture.png', dpi=300)

# show_shapes=True displays the input and output shape of each layer.

Compiling and training the model

In [10]:

model.compile(optimizer='adam', loss='sparse_categorical_crossentropy',
              metrics=['accuracy'])

# Save the best model seen so far and stop once validation loss stops improving.
checkpoint_cb = keras.callbacks.ModelCheckpoint('best-cnn-model.h5',
                                                save_best_only=True)

early_stopping_cb = keras.callbacks.EarlyStopping(patience=2,
                                                  restore_best_weights=True)

history = model.fit(train_scaled, train_target, epochs=20,
                    validation_data=(val_scaled, val_target),
                    callbacks=[checkpoint_cb, early_stopping_cb])
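The effect of `patience=2` can be illustrated without training anything. The following is a simplified sketch of the early-stopping rule, not the Keras implementation: it ignores options such as `min_delta`, and the function name `early_stopping_epoch` and the loss values are made up for illustration.

```python
def early_stopping_epoch(val_losses, patience=2):
    """Return (stop_epoch, best_epoch) as 0-based indices.

    Training stops once the validation loss has failed to improve
    for `patience` consecutive epochs.
    """
    best_loss = float("inf")
    best_epoch = -1
    wait = 0
    for epoch, loss in enumerate(val_losses):
        if loss < best_loss:
            # New best: remember it and reset the counter.
            best_loss, best_epoch, wait = loss, epoch, 0
        else:
            wait += 1
            if wait >= patience:
                return epoch, best_epoch
    # Never triggered: training ran through every epoch.
    return len(val_losses) - 1, best_epoch


# Hypothetical validation losses, one per epoch.
losses = [0.40, 0.32, 0.28, 0.29, 0.30, 0.27]
stop, best = early_stopping_epoch(losses, patience=2)
print(stop, best)  # 4 2
```

In this made-up run, training stops at epoch index 4, and `restore_best_weights=True` would correspond to rolling back to the weights from epoch index 2.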

In [11]:

import matplotlib.pyplot as plt

In [12]:

plt.plot(history.history['loss'])

plt.plot(history.history['val_loss'])

plt.xlabel('epoch')

plt.ylabel('loss')

plt.legend(['train', 'val'])

plt.show()

Evaluation and prediction

In [13]:

model.evaluate(val_scaled, val_target)

# Returns [loss, accuracy].


In [14]:

plt.imshow(val_scaled[0].reshape(28, 28), cmap='gray_r')

plt.show()

In [15]:

preds = model.predict(val_scaled[0:1])

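# preds is the output of the final Dense layer (the predicted class probabilities).

# predict() expects a leading batch dimension, so the sample is selected with the slice [0:1], giving an input of shape (1, 28, 28, 1).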

print(preds)

# Check the 9th element, which corresponds to the bag class.
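The difference between indexing and slicing is easy to confirm with plain NumPy. In this minimal sketch, `fake_val` is a hypothetical stand-in for `val_scaled` with only five samples.

```python
import numpy as np

# Hypothetical stand-in for val_scaled: 5 samples of shape (28, 28, 1).
fake_val = np.zeros((5, 28, 28, 1))

# Integer indexing drops the batch axis.
print(fake_val[0].shape)    # (28, 28, 1)

# Slicing keeps the batch axis, with a batch size of 1.
print(fake_val[0:1].shape)  # (1, 28, 28, 1)
```

The sliced form is the one that matches the model's expected input of (batch, 28, 28, 1).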

In [16]:

plt.bar(range(1, 11), preds[0])

plt.xlabel('class')

plt.ylabel('prob.')

plt.show()

In [17]:

classes = ['T-shirt', 'Trousers', 'Sweater', 'Dress', 'Coat',
           'Sandal', 'Shirt', 'Sneaker', 'Bag', 'Ankle boot']

In [18]:

import numpy as np

print(classes[np.argmax(preds)])
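Calling `np.argmax` without an axis flattens the array first, which works here only because `preds` holds a single sample. The sketch below uses made-up probabilities (`fake_preds`) and an English version of the class list (`class_names`), both assumptions for illustration, to show the batch-safe form with `axis=1`.

```python
import numpy as np

class_names = ['T-shirt', 'Trousers', 'Sweater', 'Dress', 'Coat',
               'Sandal', 'Shirt', 'Sneaker', 'Bag', 'Ankle boot']

# Hypothetical softmax outputs for two samples (each row sums to 1).
fake_preds = np.array([
    [0.01, 0.01, 0.01, 0.01, 0.01, 0.01, 0.01, 0.01, 0.91, 0.01],
    [0.01, 0.91, 0.01, 0.01, 0.01, 0.01, 0.01, 0.01, 0.01, 0.01],
])

# axis=1 picks the most probable class separately for each sample.
indices = np.argmax(fake_preds, axis=1)
labels = [class_names[i] for i in indices]
print(labels)  # ['Bag', 'Trousers']
```

Note that the class indices are 0-based, so the bag class at index 8 is the ninth bar in the chart drawn with `range(1, 11)`.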

Test set score

In [19]:

test_scaled = test_input.reshape(-1, 28, 28, 1) / 255.0

In [20]:

model.evaluate(test_scaled, test_target)

# Good results depend on finding a suitable combination of convolutional, pooling, and dropout layers.


Source: 박해선 (Park Hae-sun), 『혼자 공부하는 머신러닝+딥러닝』, 한빛미디어 (Hanbit Media), 2021, pp. 422-441
